For Software Testing Passionates...

Software Testing, Automation Testing, Manual Testing, Black Box Testing, Yellow Box testing, Green Box Testing, Test Scenarios, Test Cases, Test execution, Test Log report, Bug Report, Documentation

Java Programming Questions and Their Solutions

1. Write a program for the Fibonacci series:

Program:

import java.util.Scanner;

public class Practice {

    // Write a program for the Fibonacci series.
    // Each number in the series is the sum of the previous two numbers: 0, 1, 1, 2, 3, 5, 8, ...
    public static void main(String[] args) {
        // The Scanner class is used to take input from the user; try-with-resources closes it
        try (Scanner sc = new Scanner(System.in)) {
            long x = 0; // term at position k - 2
            long y = 1; // term at position k - 1
            long z;     // term at position k (long overflows after position 92)

            System.out.print("Enter the position of the last term to print: ");
            int num = sc.nextInt();
            System.out.println("The position entered by you is: " + num);

            System.out.println("Expected Fibonacci series is: ");
            // Print positions 0 and 1 only if the user asked for them
            if (num >= 0) {
                System.out.print("\t" + x);
            }
            if (num >= 1) {
                System.out.print("\t" + y);
            }

            // Positions 0 and 1 are already printed, so the loop starts at position 2 (the 3rd term)
            for (int k = 2; k <= num; k++) {
                z = x + y; // each term is the sum of the two previous terms
                System.out.print("\t" + z);
                x = y;     // after each iteration x moves forward to y
                y = z;     // and y moves forward to the newly computed term
            }
            System.out.println();
        }
    }
}

Tips for Writing Test Cases

Below are general tips for writing test cases:

- Every test case name must start with Verify, Validate, or Check

- Test cases must cover both positive and negative scenarios

- Every test case must be traceable to a module or testing topic

- A test case must specify what the tester has to do and the expected response of the software

- A test case must give consistent results, i.e. different testers executing it should not get different results

- Test cases must be reusable

- A test case must be divided into sub test cases if it has more than about 15 steps

- A failed test case must reference the ID of the defect it exposed

- Test cases prepared by testers must be reviewed by the test lead

- Test cases must be approved by the people at the customer site.
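Some of the tips above are mechanical enough to be checked automatically before a review meeting. The sketch below is a minimal, hypothetical reviewer: the `TestCase` fields, the `review` method, and the 15-step limit (taken from the "about 15 steps" tip) are illustrative assumptions, not part of any standard or tool. Tips such as consistency or customer approval still need a human reviewer.

```java
import java.util.ArrayList;
import java.util.List;

public class TestCaseReview {

    // Hypothetical, simplified test case holding only the fields the checks below need
    static class TestCase {
        final String name;
        final String module;      // used for traceability
        final List<String> steps;
        final boolean failed;
        final String defectId;    // null when no defect is linked

        TestCase(String name, String module, List<String> steps, boolean failed, String defectId) {
            this.name = name;
            this.module = module;
            this.steps = steps;
            this.failed = failed;
            this.defectId = defectId;
        }
    }

    static final int MAX_STEPS = 15; // assumed threshold from the "about 15 steps" tip

    // Returns the tips a test case violates; an empty list means it passes these checks
    static List<String> review(TestCase tc) {
        List<String> problems = new ArrayList<>();
        String lower = tc.name.toLowerCase();
        if (!(lower.startsWith("verify") || lower.startsWith("validate") || lower.startsWith("check"))) {
            problems.add("name must start with Verify/Validate/Check");
        }
        if (tc.module == null || tc.module.isBlank()) {
            problems.add("not traceable to a module");
        }
        if (tc.steps.size() > MAX_STEPS) {
            problems.add("more than " + MAX_STEPS + " steps; split into sub test cases");
        }
        if (tc.failed && (tc.defectId == null || tc.defectId.isBlank())) {
            problems.add("failed test case has no defect id");
        }
        return problems;
    }

    public static void main(String[] args) {
        TestCase good = new TestCase("Verify login with valid credentials", "Login",
                List.of("Open login page", "Enter valid user name and password", "Click Login"),
                false, null);
        TestCase bad = new TestCase("Login test", "", List.of("Click Login"), true, null);
        System.out.println(review(good)); // []
        System.out.println(review(bad));  // three problems
    }
}
```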

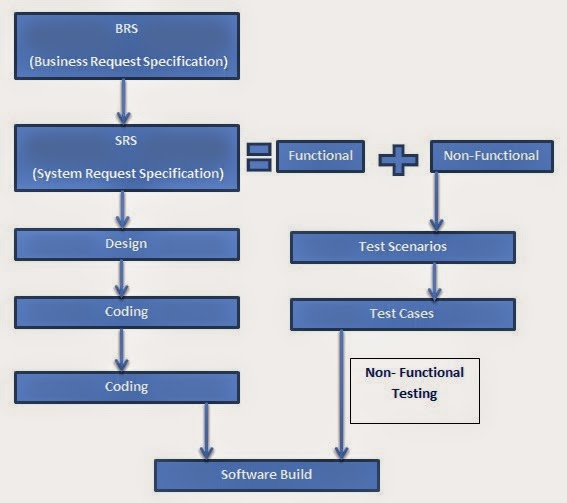

Non-Functional Test Design

Non-functional specification-based test design starts only after the test scenarios and test cases based on the functional specifications, use cases, or screens of the Software Under Test (SUT) have been written. To prepare non-functional test scenarios, testers rely on the non-functional specifications in the System Requirement Specification (SRS).

Figure: Non-Functional Specification Based Test Design

As the figure above shows, testers read the non-functional specifications in the SRS and then prepare test scenarios for usability, performance, security, data volume, intersystem, hardware configuration, installation, multilinguality, etc. Generally, these test scenarios cover the following points:

- Verify the spelling of labels on every screen of the SUT

- Verify that labels are in init caps (initial capital letters) on every screen of the SUT

- Verify color uniformity throughout the screens of the SUT

- Verify font-type uniformity throughout the screens of the SUT

- Verify font-size uniformity throughout the screens of the SUT

- Verify uniform alignment of controls throughout the screens of the SUT

- Verify uniform spacing between controls throughout the screens of the SUT

- Verify that functionally related controls are grouped correctly throughout the screens of the SUT

- Verify the meaning of labels throughout the screens of the SUT

- Verify the correctness of tool tips throughout the screens of the SUT

- Verify that each icon's symbol matches the functionality it provides throughout the screens of the SUT

- Verify shortcuts throughout the screens of the SUT

- Verify abbreviations throughout the screens of the SUT

- Verify the meaning of error messages throughout the screens of the SUT

- Verify OK, Cancel, and similar buttons throughout the screens of the SUT

- Verify the appearance of the system menu throughout the screens of the SUT

- Verify the existence of scroll bars throughout the screens of the SUT

- Verify the status bar or progress bar throughout the screens of the SUT

- Verify the format and visibility of date and time on the screens of the SUT

- Verify the help documents of the SUT

The above points are also called a checklist.
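Several checklist items ask for uniformity of one property (color, font type, font size) across all screens. Once those property values have been captured, for example by an automation tool, the uniformity check itself is simple. In this sketch the screen names, the captured font sizes, and the `UniformityCheck` class are hypothetical; collecting the values from a real SUT is outside its scope.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.Set;
import java.util.TreeSet;

public class UniformityCheck {

    // Distinct values of one UI property (e.g. font size) seen across all screens, sorted
    static Set<String> distinctValues(Map<String, String> valueByScreen) {
        return new TreeSet<>(valueByScreen.values());
    }

    // The property is uniform when every screen reports the same value
    static boolean isUniform(Map<String, String> valueByScreen) {
        return distinctValues(valueByScreen).size() <= 1;
    }

    public static void main(String[] args) {
        // Hypothetical font sizes captured from each screen of the SUT
        Map<String, String> fontSize = new LinkedHashMap<>();
        fontSize.put("Login", "12px");
        fontSize.put("Home", "12px");
        fontSize.put("Reports", "14px");

        if (isUniform(fontSize)) {
            System.out.println("PASS: font size is uniform");
        } else {
            System.out.println("FAIL: font sizes found " + distinctValues(fontSize));
        }
    }
}
```

With the sample data, the Reports screen breaks uniformity, so the check reports a failure listing both sizes.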

Test Design

When test planning is complete, testers receive the test plan document, along with the System Requirement Specification (SRS) document and sometimes the Business Requirement Specification (BRS) document. If testers lack training, they study these documents to gain a good grasp of the domain and of the application, project, or product. Once testers understand the documents properly, they prepare the Test Design document, which is of two types:

1) High Level Test Design

2) Low Level Test Design

High Level Test Design

This is the first step in preparing the test design. In this step, testers prepare test scenarios for the relevant modules of the software under test. To create the test scenarios, testers are given one or more of the following:

a) Functional Specification

b) Use Case

c) Screen/Mock-up

d) Non Functional Specification

Whichever of these four inputs is used, test scenarios are prepared in the same way, using the following three techniques:

1. BVA (Boundary Value Analysis)

2. ECP (Equivalence Class Partition)

3. Decision Table


- Boundary Value Analysis deals with the size or range of a particular field (inputs and outputs of the application/software/product); for example, a field should accept a minimum of 4 characters and a maximum of 15 characters.

- ECP deals with the type or format of the inputs to a field (inputs and outputs of the application/software/product); for example, whether a field should accept only alphabetic characters, special characters, or blank spaces, or should reject numeric values.

- A decision table lists the expected output for each combination of valid and invalid field inputs. It is used to define the positive and negative inputs that validate variations of the functionality in the software under test.
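The BVA and ECP bullets above can be turned into concrete test inputs. This sketch assumes a hypothetical field that accepts 4 to 15 letters only; `isValid` stands in for the expected behaviour taken from the specification (in real testing the expected result comes from the SRS, not from the code under test). BVA picks lengths at and on either side of each boundary; ECP picks one representative value per input class.

```java
import java.util.List;

public class FieldTestDesign {

    static final int MIN_LEN = 4;
    static final int MAX_LEN = 15;

    // BVA: lengths just below, at, and just above each boundary
    static List<Integer> boundaryLengths(int min, int max) {
        return List.of(min - 1, min, min + 1, max - 1, max, max + 1);
    }

    // Hypothetical expected rule: 4-15 characters, letters only
    static boolean isValid(String input) {
        return input.length() >= MIN_LEN && input.length() <= MAX_LEN
                && input.chars().allMatch(Character::isLetter);
    }

    public static void main(String[] args) {
        System.out.println("BVA (size/range):");
        for (int len : boundaryLengths(MIN_LEN, MAX_LEN)) {
            String input = "a".repeat(len);
            System.out.println("  length " + len + " -> " + (isValid(input) ? "valid" : "invalid"));
        }

        // ECP (type/format): one representative value per equivalence class
        System.out.println("ECP (type/format):");
        String[][] classes = {
                {"alphabets", "abcdef"},
                {"digits", "123456"},
                {"special characters", "ab#$cd"},
                {"blank spaces", "ab  cd"},
        };
        for (String[] c : classes) {
            System.out.println("  " + c[0] + " \"" + c[1] + "\" -> " + (isValid(c[1]) ? "valid" : "invalid"));
        }
    }
}
```

Six boundary values and four class representatives replace what would otherwise be an unbounded number of possible inputs.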

Orthogonal Arrays- This technique avoids repetition of decisions in the decision table.
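A decision table can be generated by enumerating every valid/invalid combination of the input conditions. The login screen below, with two conditions and its expected outcomes, is a hypothetical example. A full table has 2^n rules for n conditions; the orthogonal-array technique mentioned above is one way to reduce that number, and it is not implemented here.

```java
import java.util.ArrayList;
import java.util.List;

public class LoginDecisionTable {

    // One rule of the decision table
    static final class Rule {
        final boolean userValid;
        final boolean passwordValid;
        final String expected;

        Rule(boolean userValid, boolean passwordValid, String expected) {
            this.userValid = userValid;
            this.passwordValid = passwordValid;
            this.expected = expected;
        }
    }

    // Hypothetical requirement: only a valid user name with a valid password logs in
    static String expectedOutcome(boolean userValid, boolean passwordValid) {
        return (userValid && passwordValid) ? "Home page is displayed" : "Error message is displayed";
    }

    // Enumerates every valid/invalid combination: 2 conditions give 2^2 = 4 rules
    static List<Rule> build() {
        List<Rule> rules = new ArrayList<>();
        for (int mask = 0; mask < 4; mask++) {
            boolean user = (mask & 1) == 0;     // bit 0 clear -> valid user name
            boolean password = (mask & 2) == 0; // bit 1 clear -> valid password
            rules.add(new Rule(user, password, expectedOutcome(user, password)));
        }
        return rules;
    }

    public static void main(String[] args) {
        System.out.printf("%-10s %-10s %s%n", "User", "Password", "Expected outcome");
        for (Rule r : build()) {
            System.out.printf("%-10s %-10s %s%n",
                    r.userValid ? "valid" : "invalid",
                    r.passwordValid ? "valid" : "invalid",
                    r.expected);
        }
    }
}
```

One rule is a positive test (both inputs valid) and the other three are negative tests.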

Low Level Test Design

After writing the test scenarios, testers expand them into test cases (here, expanding a scenario means breaking it down into smaller parts). A test scenario describes only a specific test condition, whereas a test case describes the step-by-step procedure for testing that scenario. In general, testers follow the IEEE 829 format for writing test cases.

Format of IEEE-829

1) Test Case Id- A unique number, name, or combination of number and name, used for reference.

2) Test Case Name- The name of the relevant test scenario.

3) Feature/Module- The name of the parent module of the test case.

4) Priority- The importance level of the test case:

(a) High (Priority- P0) [related to the functional part]

(b) Medium (Priority- P1) [related to the non-functional part, excluding usability]

(c) Low (Priority- P2) [related to usability]

5) Test Suite Id- The ID of the parent suite, i.e. the batch that groups several related test cases.

6) Test Set-up- The conditions required before test execution can start.

7) Test Environment- The software and hardware required to run the test cases.

8) Test Procedure- The step-by-step procedure for running the test case(s).
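The eight fields above map naturally onto a small data structure. This is a minimal sketch: the class names, the text rendering, and the sample login values are hypothetical, chosen only to show how the fields fit together.

```java
import java.util.List;

public class Ieee829TestCase {

    // Priority levels as described above: P0 functional, P1 non-functional, P2 usability
    enum Priority { P0, P1, P2 }

    // One field per item in the format above
    static final class TestCase {
        final String id;
        final String name;
        final String module;
        final Priority priority;
        final String suiteId;
        final String setUp;
        final String environment;
        final List<String> procedure;

        TestCase(String id, String name, String module, Priority priority, String suiteId,
                 String setUp, String environment, List<String> procedure) {
            this.id = id;
            this.name = name;
            this.module = module;
            this.priority = priority;
            this.suiteId = suiteId;
            this.setUp = setUp;
            this.environment = environment;
            this.procedure = List.copyOf(procedure);
        }

        // Renders the test case as a simple text document with numbered steps
        String render() {
            StringBuilder sb = new StringBuilder();
            sb.append("Test Case Id: ").append(id).append('\n');
            sb.append("Test Case Name: ").append(name).append('\n');
            sb.append("Feature/Module: ").append(module).append('\n');
            sb.append("Priority: ").append(priority).append('\n');
            sb.append("Test Suite Id: ").append(suiteId).append('\n');
            sb.append("Test Set-up: ").append(setUp).append('\n');
            sb.append("Test Environment: ").append(environment).append('\n');
            sb.append("Test Procedure:\n");
            for (int i = 0; i < procedure.size(); i++) {
                sb.append("  ").append(i + 1).append(". ").append(procedure.get(i)).append('\n');
            }
            return sb.toString();
        }
    }

    public static void main(String[] args) {
        TestCase tc = new TestCase(
                "TC_LOGIN_001",
                "Verify login with valid credentials",
                "Login",
                Priority.P0,
                "TS_LOGIN",
                "A registered user account exists",
                "Windows 10, Chrome browser",
                List.of("Open the login page",
                        "Enter a valid user name and password",
                        "Click Login and verify that the home page is displayed"));
        System.out.print(tc.render());
    }
}
```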


Test Plan Format

(1) Test Plan ID- A unique number or name for future reference.

(2) Introduction- A description of the current project.

(3) Features or Modules- The list of modules in the current project.

(4) Features to be tested- The list of modules to be tested in the current project.

(5) Features not to be tested- The list of modules not to be tested in the current project.

(6) Test Approach/Strategy- Generally just an attachment provided by the Project Manager.

(7) Test Environment- The hardware and software required for testing the current project.

(8) Entry Criteria- The conditions that must be met before test execution starts:


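- Test cases are prepared and reviewed

- Test environment is established

- Software under test has been received from the developers

(9) Suspension Criteria- The conditions under which test execution is temporarily suspended:

- Show stopper in the SUT (or deadlock)

- Test environment abandoned

- Too many defects pending (quality gap)

(10) Exit Criteria- The conditions under which test execution is stopped:

- All modules tested

- Time exceeded

- All major defects closed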

(11) Test Deliverables- The list of documents that testers prepare during testing, for example:

- Test Scenarios

- Test Cases

- Automation Programs

- Test Logs

- Defect Reports

- Status Reports

(13) Responsibilities- The allocation of work to the selected testers in terms of modules or testing topics. It represents 'Who to test'.

(14) Schedule- The dates and times for testing. It represents 'When to test'.

(15) Risks and Assumptions- The list of previously analysed risks and the solutions to overcome them.

(16) Approvals- The signatures of the test lead and the project manager.
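The entry criteria and the conditions listed after them (show stopper through all major defects closed) work as a gate on test execution. The sketch below encodes one possible reading: every entry condition must hold to start, any single blocking condition suspends execution, and testing exits when all modules are tested and all major defects are closed, or when time is exceeded. These AND/OR combinations, the order of the checks, and all names in the code are assumptions for illustration; a real test plan states its own rules.

```java
public class TestExecutionGate {

    enum Decision { NOT_READY, CONTINUE, SUSPEND, EXIT }

    // Hypothetical status flags, one per condition in the test plan format
    static final class Status {
        // Entry conditions
        boolean testCasesReviewed;
        boolean environmentEstablished;
        boolean buildReceived;
        // Suspension conditions
        boolean showStopper;
        boolean environmentAbandoned;
        boolean tooManyPendingDefects;
        // Exit conditions
        boolean allModulesTested;
        boolean timeExceeded;
        boolean allMajorDefectsClosed;
    }

    static Decision decide(Status s) {
        // Entry (assumed): every entry condition must hold before execution starts
        if (!(s.testCasesReviewed && s.environmentEstablished && s.buildReceived)) {
            return Decision.NOT_READY;
        }
        // Exit (assumed): testing is complete, or the time has run out
        if ((s.allModulesTested && s.allMajorDefectsClosed) || s.timeExceeded) {
            return Decision.EXIT;
        }
        // Suspension (assumed): any single blocking condition pauses execution
        if (s.showStopper || s.environmentAbandoned || s.tooManyPendingDefects) {
            return Decision.SUSPEND;
        }
        return Decision.CONTINUE;
    }

    public static void main(String[] args) {
        Status s = new Status();
        s.testCasesReviewed = true;
        s.environmentEstablished = true;
        s.buildReceived = true;
        System.out.println(decide(s)); // CONTINUE
        s.showStopper = true;
        System.out.println(decide(s)); // SUSPEND
    }
}
```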


Test Planning

After the test strategy has been prepared, the PM (Project Manager) assigns a Test Lead. The test lead studies the test strategy document and starts preparing the test plan document.

Figure: Test Plan

(a) Team Formation- The test lead starts the test planning process by forming the testing team. While forming the team, the test lead considers the following factors:

- Project Size (Number of Functionalities)

- Test Duration (Number of Working Days)

- Available Testers on bench

- Available resources in test environment

Case Study

Figure: Team Formation

(b) Identifying Tactical Risks- After forming the testing team, the test lead concentrates on identifying risks, which include:

- Lack of Domain Knowledge among Testers

- Lack of Documentation

- Lack of Time

- Lack of Resources

- Delays in Delivery

- Lack of Seriousness from Developers

- Lack of Communication

(c) Preparation of Test Plan- After team formation and risk analysis, the test lead prepares the test plan document in IEEE (Institute of Electrical and Electronics Engineers) 829 format.

(d) Review Test Plan- After preparing the test plan document, the test lead conducts a review meeting with the project manager, business analyst, system analyst, and the testers selected for the current project. Based on the feedback from this meeting, the test lead makes any necessary changes to the test plan.


Test Initiation or Commencement

The software testing process starts when the SRS is baselined. Test initiation is the first stage of this process. In this stage, the project manager or test manager prepares a test strategy (test methodology) document that specifies the approach the testing team will follow. There are three types of strategy in testing:

- Exhaustive Testing

- Planned Testing

- Ad-hoc Testing
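To see why the first strategy, exhaustive testing, is not practical, count the inputs of even a tiny function. A function taking two 32-bit `int` parameters has 2^64 input combinations. The sketch below assumes a hypothetical, very generous rate of one billion test executions per second and a 365.25-day year; the class and method names are illustrative.

```java
import java.math.BigInteger;

public class ExhaustiveTestingEstimate {

    // Seconds in a 365.25-day year
    static final BigInteger SECONDS_PER_YEAR = BigInteger.valueOf(31_557_600L);

    // Number of input combinations for `inputs` independent inputs of `bits` bits each
    static BigInteger combinations(int inputs, int bits) {
        return BigInteger.valueOf(2).pow(inputs * bits);
    }

    // Whole years needed to run every combination at the given rate
    static BigInteger yearsNeeded(int inputs, int bits, long testsPerSecond) {
        BigInteger testsPerYear = SECONDS_PER_YEAR.multiply(BigInteger.valueOf(testsPerSecond));
        return combinations(inputs, bits).divide(testsPerYear);
    }

    public static void main(String[] args) {
        // Two 32-bit int parameters, one billion test cases per second (assumed)
        System.out.println("Combinations: " + combinations(2, 32));
        System.out.println("Years needed: " + yearsNeeded(2, 32, 1_000_000_000L));
    }
}
```

This prints 18446744073709551616 combinations and 584 years, for just two integer inputs and no checking of outputs.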

According to the principles of software testing, exhaustive testing is impossible. For this reason, test management concentrates on planned testing or ad-hoc testing. Ad-hoc testing is followed when the testing team faces risks; when there are no risks, the team favors planned (formal, optimal) testing. For planned testing, the project manager or test manager prepares a test strategy document with the following sections:

- Scope and Objective

- Business Issues

- Test Responsibility Matrix

- Roles and Responsibilities

- Status and Communication

- Test Automation and Testing Tools

- Defect Reporting and Tracking

- Test Measurement and Metrics

- Test Management

- Risks and Assumptions

- Training Plan

Test Responsibility Matrix (TRM)- This matrix specifies the testing topics the team is responsible for in the current project.

Figure: Test Responsibility Matrix

Roles and Responsibilities- It lists the jobs in the testing team and the requirements of each job:

Figure: Roles and Responsibilities

Status and Communication- Every pair of jobs in the testing team is coordinated through different channels, for example personal meetings, offline meetings, online chat, video conferencing, and an internal communicator.

Test Automation and Testing Tools- This section states the need for test automation in the current project and the testing tools available in the company.

Defect Reporting and Tracking- This section states the communication channels required between developers and testers for reporting and tracking defects.

Figure: Defect Tracking Team

Test Measurement and Metrics- A measurement is a basic unit and a metric is a compound unit. To estimate the status of the testing process, testers use a set of measurements and metrics.

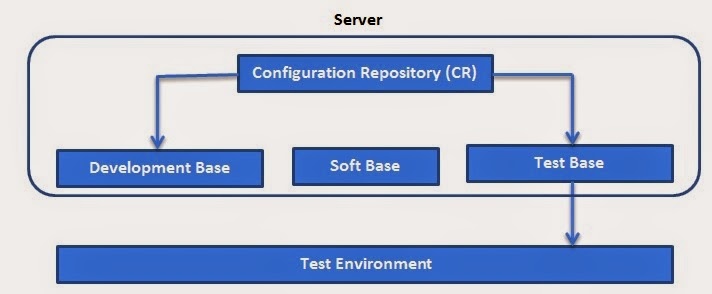
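The distinction between a measurement (a basic unit) and a metric (a compound unit) is easiest to see with numbers. In this sketch the raw counts are hypothetical measurements, and execution progress, pass rate, and defect density per KLOC are common examples of metrics derived from them; they are illustrations, not metrics this document prescribes.

```java
public class TestMetrics {

    // Measurements: raw counts collected during testing (hypothetical sample values)
    static final int TOTAL_TEST_CASES = 200;
    static final int EXECUTED = 150;
    static final int PASSED = 135;
    static final int DEFECTS_FOUND = 18;
    static final double SIZE_KLOC = 12.0; // size in thousands of lines of code

    // Metric: one measurement as a percentage of another
    static double percent(int part, int whole) {
        if (whole == 0) throw new IllegalArgumentException("whole must be non-zero");
        return 100.0 * part / whole;
    }

    // Metric: defects per thousand lines of code
    static double defectDensity(int defects, double kloc) {
        if (kloc <= 0) throw new IllegalArgumentException("size must be positive");
        return defects / kloc;
    }

    public static void main(String[] args) {
        System.out.printf("Execution progress: %.1f%%%n", percent(EXECUTED, TOTAL_TEST_CASES));
        System.out.printf("Pass rate: %.1f%%%n", percent(PASSED, EXECUTED));
        System.out.printf("Defect density: %.2f defects/KLOC%n", defectDensity(DEFECTS_FOUND, SIZE_KLOC));
    }
}
```

With the sample values this prints 75.0% execution progress, a 90.0% pass rate, and 1.50 defects/KLOC.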

Test Management- The testing team needs a shared location, called the test base, to store all testing deliverables for future use.

Figure: Test Management

Risks and Assumptions- This section lists all risks that might arise in the future and the assumptions made to overcome them.

Training Plan- The testing team needs training on the customer requirements of the current project. Testers are trained by the BA (Business Analyst), SA (System Analyst), and SME (Subject Matter Expert).


Training is optional if the testers already have experience in the current project's domain. The domain may be banking, insurance, finance, sales, telecommunications, health care, e-commerce, e-learning, etc.


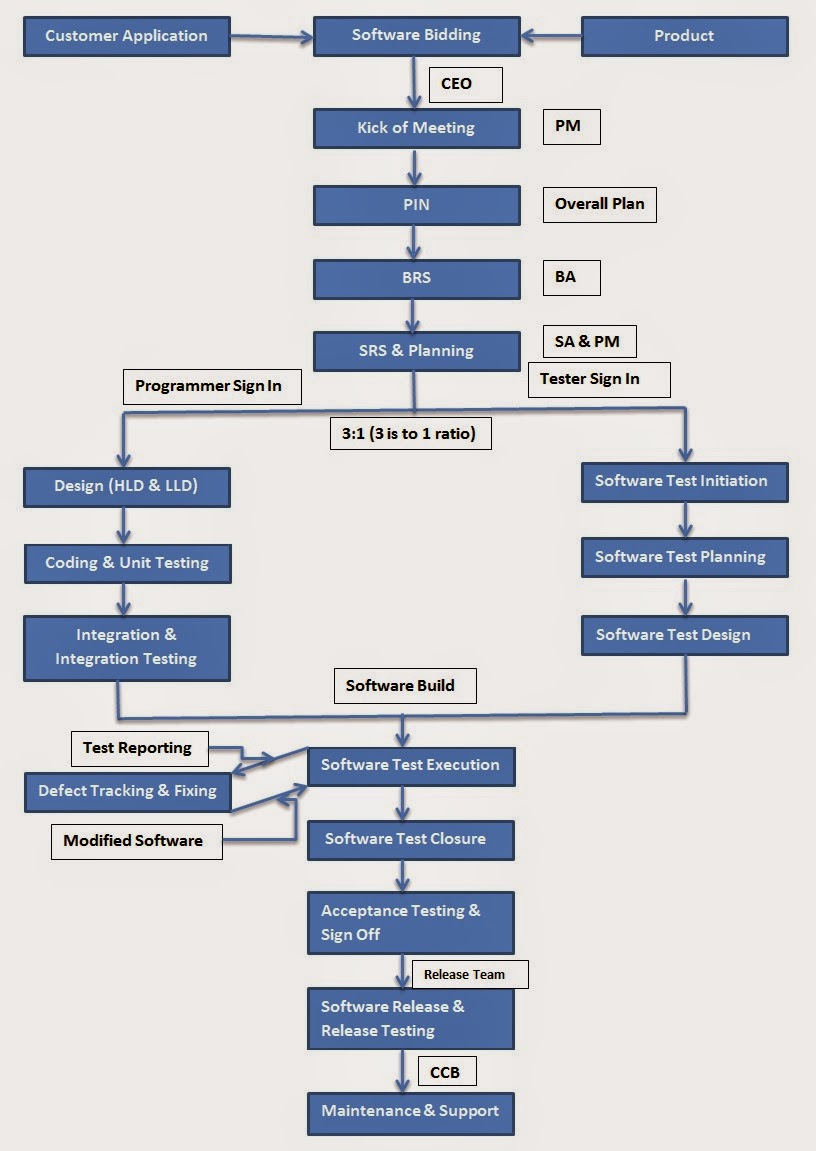

Software Testing Process

Figure: Software Testing Process

Integrating SDLC and Software Testing Process:

Figure: Integrating SDLC and Software Testing Process

- When the SRS is baselined, project management recruits programmers and testers into the current project.

- The project (or product) plan prepared by the PM is detailed, but the testing team also prepares its own separate test plan.

- The testing team studies the SRS and starts writing test cases; after receiving the build from the development team, it executes them to detect defects.

Review Techniques

Figure: Review Techniques

Review techniques are also called document testing techniques. The BA (Business Analyst), SA (System Analyst), and designer use them to verify the BRS (Business Requirement Specification), SRS (System Requirement Specification), and design documents, respectively. While reviewing, they follow three techniques:

- Walkthrough

- Inspection

- Peer Review

Walkthrough- A document is checked from start to finish for correctness and completeness.

Inspection- A document is searched for a specific factor.

Peer Review- Two similar documents are compared to estimate correctness and completeness.

These review techniques are also called static testing techniques.

White Box, Black Box, Yellow Box, and Green Box testing techniques together are called dynamic testing techniques.

Maintenance or Support

Figure: Change Control Board

While using the software, people at the customer site send software change requests (SCRs) to the company. Project management maintains a Change Control Board (CCB) to receive these requests. The CCB consists of developers and testers who carry out the changes to the software.

Enhancement(s)-

Figure: Enhancements

- Perform Changes

- Test Changes

- Release software patch to customer site

This maintenance is further divided into two types:

1) Enhancive Maintenance

2) Adaptive Maintenance

To correct defects, the CCB conducts root cause analysis to identify the areas of the software that must be changed, and then the team will:

- Perform Changes

- Test Changes

- Release a patch to the customer site

- Improve the capability of both testers and developers (people improvement) and the process for upcoming projects (process improvement)

This is called corrective maintenance.
